The Product process at Ontruck

This article was originally posted on the Ontruck Product blog.

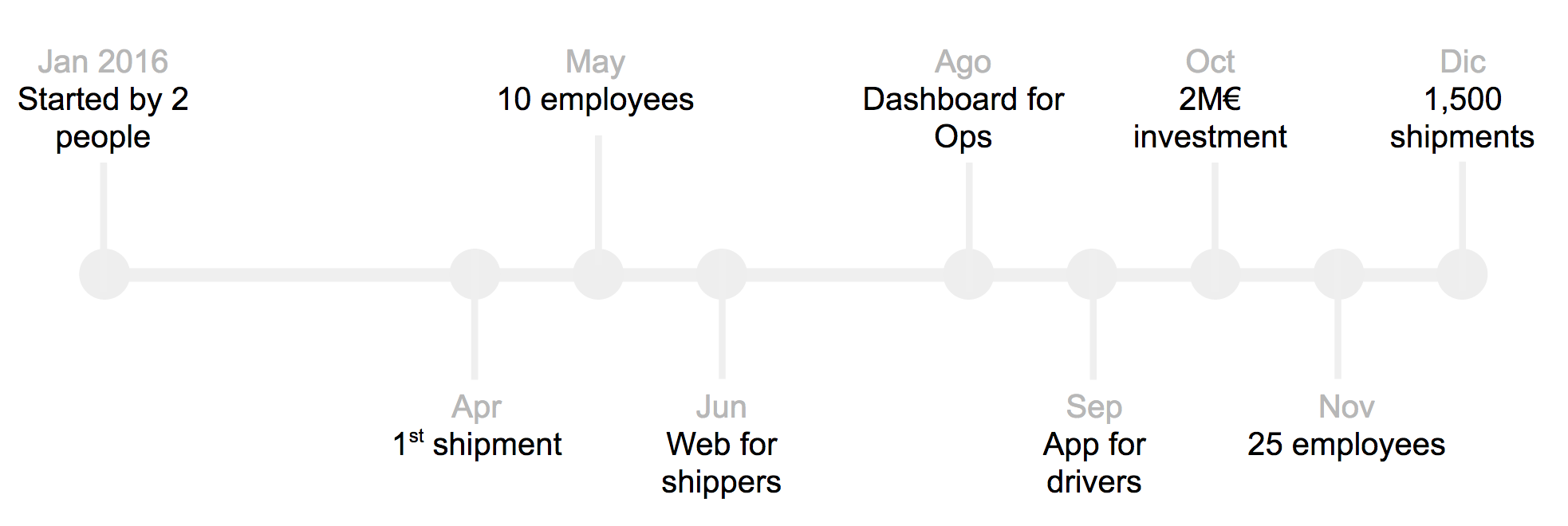

Ontruck is one year old and we are really proud of the progress we have made so far. We have turned an idea for improving the logistics sector into a reality that improves the day-to-day life of hundreds of companies and drivers.

We are sharing our product process in order to get feedback and improve it, help onboard new employees, attract new talent and help the startup ecosystem. So please, if you have any questions or suggestions, don’t hesitate to comment!

What’s Ontruck

Ontruck is transforming the road transportation industry, a €600+bn market in Europe. We are building the leading logistics network for B2B, on-demand road transport. We make trucking simple, transparent and on-demand. We are backed by the top seed investors in Europe (UK, Germany and Spain). Our team is growing rapidly and is made up of the best in Tech, Product, Logistics and Operations.

What we have achieved this year

We have built and iterated on the first versions of our products. Shippers get a web platform that makes finding the right truck quick and simple. Carriers can accept shipments through a mobile app, which lets them grow and manage their business with ease.

We have hired a team of over 30 senior employees of different nationalities (Spain, Colombia, Venezuela, Australia, UK, Indonesia). Every one of us has had several start-up experiences and on average we have 10+ years of experience with somewhat similar challenges. We know success, we know failure; we’ve built platforms from scratch, we’ve dealt with large legacy systems. We care about each other and about the product we’re building.

Intuition doesn’t work at Ontruck

One of the main differences between Ontruck and other startups is that we don’t use our own products. We don’t drive trucks, we don’t have factories and we don’t ship pallets. This forces us to be humble and listen to our customers, because that’s all we have.

This potential disadvantage is also an advantage in its own right, because it forces us to build the product our users really need, not the product we think they want based on our own intuition or previous experiences.

To do that, we have developed a product process that we follow for every new feature or project. We have iterated on this process for many months and it has been used by both senior and junior people. The process has eight steps.

1. Understand

Feature requirements can come from many people, with different levels of specification and with or without a proposed solution. However, we always start by understanding the problem. At Ontruck we care about problems, not about solutions. Solutions are biased by the person who proposes them and they can mean different things to each of us. Problems, on the other hand, are easier to share and discuss.

We first focus on the job to be done. We shadow or interview our Ops and Sales colleagues to understand their needs and what they hear from clients. Then we visit or call clients; this is really useful for getting a ton of information, not just about the problem at hand but about the full experience with Ontruck.

After this, we scope the problem we want to solve. This is one of the first feature reductions we make in order to be as lean as possible. Then the whole squad shares any concerns about potential problems we need to clarify.

Example: Multi-user functionality

Sales sent us an email with product needs from a shipper. They said something like “they need an Admin apart from the individual accounts”.

In a traditional scenario, we would have built a proper role and permission structure to allow clients to manage themselves. However, because we focused on the problem and interviewed several clients, we discovered that they didn’t need an admin or permissions at all. They just needed to see a full list of shipments and receive notifications only for their own.

2. Diverge

Once we clearly know what the problem is, our minds start to think of the obvious solution. However, we try to avoid that line of thought and instead practice different techniques to diverge and generate many ideas for solving the problem.

Crazy Eights are useful for brainstorming crazy ideas, because they reduce the problem to just a small piece of paper and a few seconds. Mind maps are required for more complicated projects where you need to group ideas. Card sorting is interesting for analyzing the priority of data on a screen. Flows are useful for diverging on user interactions.

We also look at examples from other websites and apps, mostly from top consumer and SaaS companies, in order to get interesting ways of simplifying the product.

Example: Do we really need to build a mobile app?

It was around March 10 when we decided we wanted to start selling shipments on April 1. We were going to accept shipment orders by email or phone but we wanted to validate that drivers were going to accept shipments with their phone in just a few minutes.

The solution was clearly to build a basic app for the drivers. Would we do it natively on iOS and Android? Would we do it cross-platform? Would we do it on a mobile website? Those were our questions. However, those were tech questions, not product questions.

What did we want to validate? Just that we were able to send a shipment offer to many drivers and that one of them would answer quickly. Suddenly, a crazy solution came up. Let’s use a Telegram channel! It took less than one hour to set up, it worked on all kinds of phones and we could iterate on the messages several times per day. This crazy solution has allowed us to bill thousands of euros.

3. Decide

After experimenting and bouncing ideas around, we decide what we need to do. This is the second moment in the product process when we reduce the scope of the project. We are always looking for ways to reduce the work we need to do. To reach this decision, we groom the research with Tech, QA and Ops/Sales/Marketing.

The output of these meetings is usually a plan to phase the project in several steps. We start with the minimum value we need to add and we delay other interesting use cases for later. If we don’t need to design or code, even better.

In this step, it’s very important to draw a flow of the interactions, because it makes everyone think through the cases so we don’t forget a scenario. We start on paper or a whiteboard and then transfer it to Draw.io.

This is the step when you should decide the KPIs you are going to improve. You are going to use them to measure the success of the project after deployment.

Example: Do we really need a dashboard?

We receive quotes constantly and Sales wanted to act on them. The first thought, from a business person, is to build a dashboard. After some talks, we realized we didn’t know which information was most needed, so building something could be a waste of time.

We agreed that, for now, Sales just needed to see the quotes in real time until they figured out how the quotes could be more useful. For that we didn’t need to build anything. We just connected the quote event from Segment to a channel in Slack. This solution took 5 minutes and has helped us close more deals.

4. Proto

Only after we have reduced the scope and phased the project do we start drawing. It’s sad how most companies start at this phase. For us this step is one of the least important ones. Our focus at this point is to build a clear user experience, reducing the cognitive load for our users and making the interface responsive on all devices.

We start on paper with marker pens, thinking about the components we will use. We follow the flow we previously built to make sure we cover all cases. Once we are happy with it, we start working in Sketch and building the flows in Marvel. We meet with the tech members of the squad to collect their feedback and make sure the solution is easy to implement and makes sense to them.

On special occasions we ask Tech to help us with a pseudo-build. This is only required if we feel we really need it for the user testing.

5. User Testing

This is another step that few companies do. We are humble: we don’t know whether our proposed solution is going to work. That’s why we test almost all features with our internal and external clients.

We prepare a Marvel flow and go to visit clients and drivers. Apart from the feedback we get on the feature, these visits are very useful for getting broader feedback about our company. Before going, we study their previous interactions with us, especially looking for incidents that may have happened. Each time we go, we come back with lots of learnings, both about the new feature we were testing and about other scenarios.

6. Build

Once we feel comfortable with the interactions and the designs, we do a grooming with Tech to fully discuss the project and answer all questions they may still have. As they have been part of the product process since the beginning, there are usually few questions left and all design cases are covered.

Tech then meets to create and estimate the job stories for this project. We use the Fibonacci scale and we try to follow the jobs-to-be-done philosophy. We still have a lot of work to do on this. This is also the step where Tech adds the acceptance criteria to each story. We now have a QA Engineer, but we follow a QA Assistance approach, so every tech and product person is responsible for the quality of the project.

In the next planning session we add the stories, highlighting those that could be blockers. The squad is responsible for unblocking itself.
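As a small illustration of estimating on a Fibonacci scale, the sketch below snaps a rough effort guess to the next value on the scale. It is not Ontruck’s tooling: the scale values, the `to_story_points` helper and the choice to round up are all assumptions made for the example.

```python
# Hypothetical helper: snap a rough effort guess to a Fibonacci story-point scale.
# The scale and the round-up rule are illustrative assumptions.
FIBONACCI_SCALE = (1, 2, 3, 5, 8, 13, 21)


def to_story_points(raw_estimate: float) -> int:
    """Return the smallest scale value that is >= the raw estimate.

    Estimates above the largest value are capped at that value.
    """
    for points in FIBONACCI_SCALE:
        if raw_estimate <= points:
            return points
    return FIBONACCI_SCALE[-1]


if __name__ == "__main__":
    for guess in (0.5, 4, 8, 100):
        print(guess, "->", to_story_points(guess))
```

Rounding up rather than to the nearest value is one possible convention; the widening gaps in the scale are what discourage false precision on larger stories.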

7. Launch

After the project has been properly tested and validated by Product, we are ready to launch it. However, before doing so, we do a demo in front of the whole company. We have two objectives: to explain the new feature to Ops/Sales/Marketing and to celebrate what we have built together.

One of the particularities of our demo day is that the demo is done by several random Ops/Sales/Marketing employees. They don’t know about the features; we just ask them to do a job and they have to do it. The funny thing is that this is a real test of whether we have done great work on the product. Many times we detect small things we need to change before deploying.

This demo alone is not sufficient to teach the features to Ops/Sales/Marketing. We also run specific sessions about them and provide a document that explains each feature, with an emphasis on the edge cases and on what we do and don’t cover.

8. Measure

In the days and weeks after launching, we follow the progress of the feature with two approaches:

- Qualitative: we check usage on Inspectlet, we gather inbound and outbound user feedback and we shadow our colleagues and clients.

- Quantitative: we analyze the data using Amplitude and we review the marketing funnels.

If the feedback is not positive, we consider an iteration in the following sprints.

Example: Features that clients don’t use

We had built an optional feature for companies that gave them more flexibility; however, after some weeks it wasn’t being used. We reviewed its usage and realized that some clients didn’t know about it and others didn’t care much about it because they were comfortable with the default option.

We made some calls, analyzed the data and decided to enable it for 20 clients to see how they reacted. The conclusion was that we had been extremely cautious and nobody was having a problem with it, so we could in fact make it the only option. Based on this feedback we removed the other options and left just one.
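A limited rollout like the one in this example can be sketched as a simple allowlist check. This is a hypothetical illustration, not Ontruck’s implementation: the client IDs, the `PILOT_CLIENTS` set and the `is_feature_enabled` helper are invented for the example.

```python
# Hypothetical sketch of enabling a feature for a small pilot group.
# All names and client IDs below are illustrative assumptions.

# Pilot group: 20 made-up client IDs ("client-1" .. "client-20").
PILOT_CLIENTS = {f"client-{n}" for n in range(1, 21)}


def is_feature_enabled(client_id: str, enabled_for_all: bool = False) -> bool:
    """Return True if the feature should be active for this client.

    While the pilot runs, only allowlisted clients get the feature.
    Once the pilot succeeds, `enabled_for_all` switches it on for everyone.
    """
    if enabled_for_all:
        return True
    return client_id in PILOT_CLIENTS


if __name__ == "__main__":
    print(is_feature_enabled("client-7"))   # a client in the pilot group
    print(is_feature_enabled("client-99"))  # a client outside the pilot group
```

Keeping the pilot group as plain data makes it cheap to widen the group or, as happened here, to drop the check entirely once the feature becomes the only option.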